During the Cold War years, the nuclear arms race between the United States and the Soviet Union was perceived as the greatest threat to the future of humanity.

Every new nuclear weapon produced by one side compelled the other to produce another, further escalating global tensions. With the end of the Cold War, both the arms race and the security dilemma gradually faded from public attention.

After recent developments in the U.S.-Iran war, however, we are rediscovering these concepts in a different context.

In 1942, then U.S. President Franklin D. Roosevelt brought together some of the most accomplished scientists of the era at a facility in Los Alamos for a secret project. The aim was to make the United States the first power in the world to possess a nuclear weapon. The project became known as the Manhattan Project.

Roosevelt died on April 12, 1945, and therefore never saw the project’s concrete outcomes. Nevertheless, the Manhattan Project was successful. The first nuclear detonation took place on July 16, 1945, in the New Mexico desert, only a few months after the Allied victory on the European front.

Just weeks after the successful test, atomic bombs were dropped on Hiroshima and Nagasaki on August 6 and August 9, 1945, respectively. The two bombs caused the deaths of hundreds of thousands of people and rendered both cities uninhabitable for years. These attacks remain, to this day, the only instances in which nuclear weapons have been used during a war.

Perhaps the most disturbing fact about this process was that the team leading the Manhattan Project could not fully predict the consequences of the first nuclear test.

Some scientists expressed serious concerns that the test explosion in the New Mexico desert could ignite the atmosphere and destroy the world. But it was wartime and this possibility was considered an acceptable risk. The test proceeded despite these uncertainties.

The United States’ monopoly on nuclear weapons did not last long. The Soviet nuclear program bore fruit in 1949. The world thus entered a bipolar era defined by two nuclear powers. Most of the world came under the nuclear security umbrellas of these two powers, organized as NATO and the Warsaw Pact.

Between the end of the U.S. nuclear monopoly in 1949 and the end of the Cold War in 1991, much of the world lived in fear of annihilation through a nuclear war between the United States and the Soviet Union.

Schools conducted regular drills in preparation for possible nuclear attacks and millions stockpiled iodine tablets and canned food. The nuclear war doctrine of the period was based on the concept of second-strike capability, which aimed to ensure that even if one actor were destroyed, it could still retaliate and destroy the other side as well.

These fears were therefore well-founded. During this period, the United States, the Soviet Union, and other international actors allocated enormous budgets to expand their nuclear arsenals.

At the same time, scientists who had worked on the Manhattan Project founded the Bulletin of the Atomic Scientists. The group’s most notable initiative was the Doomsday Clock.

The Doomsday Clock was designed as a metaphor representing how close humanity is to global catastrophe. According to the metaphor, as humanity moves closer to destruction, the clock approaches midnight.

During the most critical moments of the Cold War, millions closely followed the clock’s hands. Political crises such as the Cuban Missile Crisis pushed the United States and the Soviet Union into what appeared to be a race to expand their nuclear arsenals.

This tendency among states later came to be described as the nuclear arms race. When one actor continued to arm itself, the other was incentivized to do the same. The rationality of this persistence in expanding nuclear arsenals was debated, yet it was impossible for either side to be fully certain of the other’s intentions.

Experts proposed the idea of a “hotline” enabling direct communication between the White House and the Kremlin, in the hope that such a mechanism could mitigate the destructive potential of this prisoner’s dilemma.
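The logic of this dilemma can be made concrete with a small sketch. The payoff numbers below are purely illustrative assumptions, not historical estimates, and the names `PAYOFFS`, `best_response`, and `equilibria` are hypothetical helpers introduced only for this example. Under these assumed payoffs, arming is the better choice for each side whatever the other does, even though mutual restraint would leave both better off.

```python
# Illustrative two-player arms race modeled as a prisoner's dilemma.
# All payoff values are hypothetical; higher numbers mean a better outcome.
ARM, RESTRAIN = "arm", "restrain"
CHOICES = (ARM, RESTRAIN)

# PAYOFFS[(my_choice, their_choice)] -> (my_payoff, their_payoff)
PAYOFFS = {
    (RESTRAIN, RESTRAIN): (3, 3),  # mutual restraint: safe and cheap
    (RESTRAIN, ARM): (0, 4),       # I hold back, the rival gains the edge
    (ARM, RESTRAIN): (4, 0),       # I gain the edge
    (ARM, ARM): (1, 1),            # costly, dangerous stalemate
}


def best_response(their_choice):
    """Return the choice that maximizes my payoff against a fixed rival choice."""
    return max(CHOICES, key=lambda mine: PAYOFFS[(mine, their_choice)][0])


def equilibria():
    """Return outcomes where neither side gains by changing its choice alone.

    The game is symmetric, so the same best_response function serves both players.
    """
    return [
        (a, b)
        for a in CHOICES
        for b in CHOICES
        if best_response(b) == a and best_response(a) == b
    ]


if __name__ == "__main__":
    for rival in CHOICES:
        print(f"If the rival chooses to {rival}, my best response is to {best_response(rival)}")
    print("Stable outcomes:", equilibria())
```

In this toy model the only stable outcome is mutual armament, which pays each side less than mutual restraint would. A communication channel does not change the payoffs, but it can reduce the uncertainty about intentions that drives each side toward the worse outcome.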

In the following years, tensions between the United States and the Soviet Union gradually declined. The Cold War ended in 1991 with the dissolution of the Soviet Union. As the rigid realist atmosphere of the Cold War dissipated, liberal democratic peace theory quickly gained popularity in intellectual circles.

While only a few years earlier the end of humanity through nuclear war was still considered a realistic possibility, some intellectuals went so far as to argue that the liberal international order represented the end of history.

The world entered a different era; rather than continuing the arms race, countries such as Kazakhstan, Ukraine, Belarus, and South Africa voluntarily relinquished their nuclear arsenals. People largely forgot the Doomsday Clock.

Thirty-five years later, the concept of an arms race is once again being widely discussed, albeit in a different context: the AI arms race. Today, many states invest heavily in artificial intelligence, believing it will provide strategic advantage.

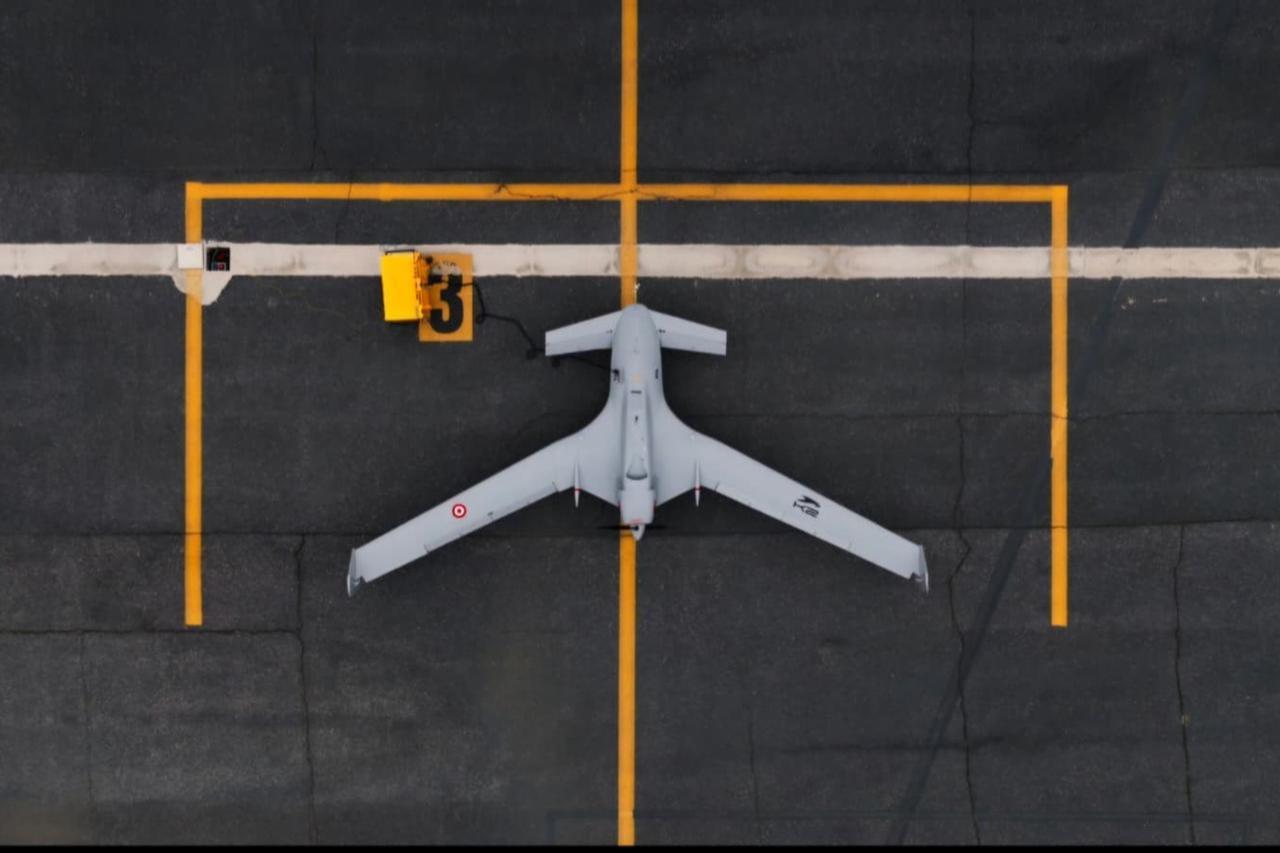

These investments manifest in various forms, ranging from autonomous weapons to big data systems. The ongoing war between the United States and Iran illustrates how artificial intelligence can be used on the battlefield.

Military decision-support systems use artificial intelligence to help decision-makers filter massive volumes of data and extract relevant information. Given that intelligence today relies less on human sources and more on open-source big data and on visual data such as drone and satellite imagery, it is reasonable to assume that such AI systems are becoming a fundamental component of military intelligence.

Numerous systems, such as Project Maven, initiated by the U.S. Department of Defense in 2017, enable the production of actionable intelligence through machine learning applied to large datasets.

Another prominent AI technology is autonomous weapons. Autonomous weapons are systems that, once activated, can identify targets and initiate attacks without direct human guidance.

The use of artificial intelligence in this manner is highly controversial on ethical grounds. Nevertheless, there are strong claims that similar systems were used during U.S. and Israeli interventions in Iran. Among these claims, perhaps the most striking is the allegation that the Minab School Attack, which killed hundreds of people, many of them children, stemmed from targeting conducted by an AI-powered military decision-support system.

The AI arms race is not limited to intelligence production or kinetic attacks. Social media platforms, which are now widely used and heavily relied upon, have become a primary source of information for many individuals.

Although propaganda and disinformation have long histories as instruments of psychological warfare, both the capacity of artificial intelligence to generate false information and the echo chambers created by AI-shaped social media algorithms make AI an indispensable tool for disinformation.

Since the early hours of the U.S.-Iran conflict, both sides appear to have used artificial intelligence as an instrument of psychological warfare and disinformation.

A sentence from an internal IBM training document dated 1979 has frequently been recalled in recent years: “A computer can never be held accountable; therefore a computer must never make a management decision.”

In a world where autonomous weapons and algorithmic decision-making systems are increasingly likely to replace human decision-makers, this statement appears more like a wish than a realistic principle.

Although the emergence of artificial intelligence as an instrument of war is a disturbing idea for many, we observe a pattern strikingly similar to the nuclear arms race. The strategic advantage provided by artificial intelligence pushes actors within the system to compete in this domain as well.

Moreover, the AI arms race is in many ways more concerning than the nuclear arms race. Unlike conventional weapons, nuclear weapons are so destructive that their very existence created a strong deterrent against their use.

The tendency of nuclear-armed states to avoid direct confrontation was later formalized as nuclear deterrence theory. In the case of artificial intelligence, however, there is as yet no comparable barrier preventing its use on the battlefield.

Another issue is the highly decentralized nature of artificial intelligence technologies. For a long time, the United States and the Soviet Union remained the two dominant nuclear powers, making mechanisms such as the “hotline” effective in maintaining dialogue between the two primary actors. More than 80 years after the first nuclear test, only nine countries are believed to possess nuclear weapons.

In the case of artificial intelligence, however, the picture is entirely different. Unlike nuclear technology, AI is not limited to a small number of state-led projects governed by international institutions.

Rather, it is developed in a decentralized manner by thousands of companies and independent experts. At a time when regulatory frameworks already struggle to keep pace with developments in information technologies, the decentralized nature of AI makes effective oversight even more difficult.

Perhaps the greatest concern in this context is the problem of alignment. In its simplest definition, AI alignment refers to the challenge of designing artificial intelligence systems whose behavior is consistent with human values, intentions, and security interests.

The extent to which the interests of an AI evolving into an autonomous decision-maker will overlap with those of humans remains a subject on which experts have not yet reached consensus.

Closely related to the alignment problem is the unresolved legal question of who would be held accountable for a war crime committed by an AI system acting as a decision-maker.

Just as the scientists leading the Manhattan Project could not fully predict the consequences of the first nuclear detonation, experts today cannot foresee the long-term consequences of autonomous AI technologies. Yet the fear that other actors will continue advancing in this arms race compels all participants within the system to keep moving forward.

The U.S.-Iran war demonstrates that the role of artificial intelligence on the battlefield is no longer merely a theoretical risk. As the use of AI in the security domain increases, the nature of warfare, our understanding of security, and the role played by humanity itself will undergo profound transformations.

In this context, international regulations that confine the use of artificial intelligence in warfare within ethical and legal norms have become a necessity.

In 1983, to explain the arms race, the American scientist Carl Sagan used the following metaphor: “Imagine a room awash in gasoline, and there are two implacable enemies in that room. One of them has nine thousand matches, the other seven thousand matches. Each of them is concerned about who’s ahead, who’s stronger.”

If appropriate regulations are not put in place, an AI arms race could push the world into a dangerously similar situation.